Human-in-the-Loop Approaches in Video Annotation Workflows

Artificial intelligence and computer vision systems rely heavily on well-annotated data to achieve reliable performance. Among data preparation tasks, video annotation plays a critical role because it enables machine learning models to interpret objects, motion, and interactions across frames. However, video data is complex and dynamic: objects move, overlap, leave the frame, and change appearance from one frame to the next, which makes fully automated annotation unreliable in many real-world scenarios.

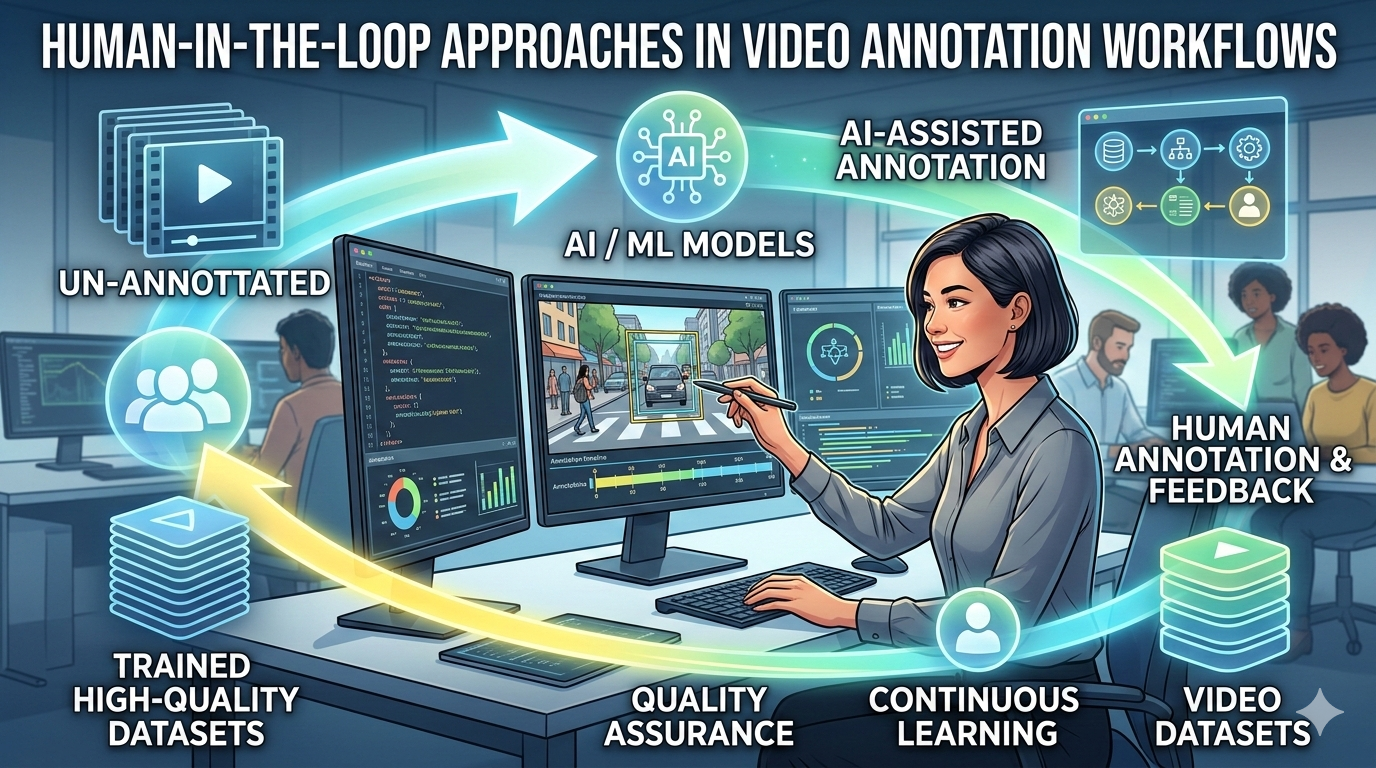

To overcome these limitations, many organizations adopt Human-in-the-Loop (HITL) approaches in their annotation pipelines. HITL combines the speed of automated tools with the contextual understanding and decision-making ability of human annotators. By integrating human oversight into annotation workflows, organizations can significantly improve the quality, accuracy, and reliability of training datasets.

At Annotera, we specialize in scalable data annotation solutions that combine advanced AI-assisted tools with skilled human annotators. As a trusted data annotation and video annotation company, we deliver high-quality labeled datasets through efficient human-in-the-loop workflows that power modern AI systems.

Understanding Human-in-the-Loop in Video Annotation

Human-in-the-Loop (HITL) refers to a collaborative machine learning approach where human expertise is integrated into different stages of the AI lifecycle, including data preparation, model training, and evaluation. The goal is to enhance the accuracy, adaptability, and reliability of machine learning systems.

In video annotation workflows, HITL means combining automated annotation tools with manual verification and correction by human annotators. Automated systems may initially label objects, events, or actions in video frames, while trained professionals review the outputs, correct errors, and ensure consistency.

This hybrid approach is particularly important for computer vision applications because videos contain temporal relationships, movement patterns, and complex environments that machines may misinterpret without human judgment.

Why Human-in-the-Loop Is Essential for Video Annotation

1. Improving Annotation Accuracy

Automated systems can rapidly process large datasets, but they often struggle with ambiguous scenes, occlusions, or unusual object interactions. Human reviewers help identify such issues and refine annotations.

Human expertise allows annotators to interpret context, understand subtle visual cues, and correct labeling errors that automated models may produce. Incorporating human oversight significantly reduces annotation errors and improves the overall quality of training data.

For AI models trained on video datasets, such as those used in autonomous vehicles, surveillance systems, or sports analytics, accurate annotations are critical for reliable predictions.

2. Handling Edge Cases and Complex Scenarios

Real-world video data frequently contains edge cases, including unusual lighting conditions, rare object classes, or unpredictable behaviors. AI models trained only on automated labels may struggle to recognize these scenarios.

Human annotators play a crucial role in identifying such anomalies and ensuring that these cases are correctly labeled. By addressing edge cases during annotation, HITL workflows help create more robust machine learning models capable of handling real-world variability.

This capability is especially important in safety-critical applications such as autonomous driving and medical imaging.

3. Continuous Model Improvement Through Feedback

Human-in-the-loop annotation workflows create a continuous learning cycle. Annotators review model predictions, correct inaccuracies, and feed the improved labels back into the training dataset.

This iterative feedback mechanism allows machine learning models to improve their performance progressively. Human corrections expose the cases where an algorithm is wrong, so each retraining round can learn from those mistakes and adapt to new scenarios more effectively.

For organizations leveraging video annotation outsourcing, this continuous improvement process ensures that models evolve alongside changing data patterns.

4. Reducing Bias and Increasing Dataset Quality

Bias in training datasets can significantly impact AI model performance and fairness. Automated labeling tools may unintentionally introduce biases due to incomplete data or algorithmic limitations.

Human reviewers can identify biased patterns and correct them during annotation. By incorporating diverse human perspectives into the labeling process, HITL workflows help ensure balanced and representative datasets.

High-quality annotations are essential for developing trustworthy AI systems that operate reliably across different environments and demographics.
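Spotting bias ultimately requires human judgment, but a simple frequency check can show reviewers where to look. The sketch below is an illustrative assumption, not a fairness metric: it reports classes whose share of all labels falls under a chosen threshold, including expected classes that were never annotated at all. The 5% default, the function name, and the class names are hypothetical.

```python
from collections import Counter
from typing import Dict, Iterable


def underrepresented_classes(
    labels: Iterable[str],
    expected: Iterable[str] = (),
    min_share: float = 0.05,  # hypothetical threshold; tune per project
) -> Dict[str, float]:
    """Return {class: share} for classes below `min_share` of all labels.

    Classes listed in `expected` but never annotated are reported with share 0.0.
    """
    counts = Counter(labels)
    for cls in expected:
        counts.setdefault(cls, 0)  # make missing classes visible as zero counts
    total = sum(counts.values())
    if total == 0:
        return {cls: 0.0 for cls in counts}
    return {cls: n / total for cls, n in counts.items() if n / total < min_share}


if __name__ == "__main__":
    labels = ["car"] * 90 + ["pedestrian"] * 8 + ["wheelchair_user"] * 2
    print(underrepresented_classes(labels, expected=["cyclist"]))
    # {'wheelchair_user': 0.02, 'cyclist': 0.0}
```

A low share is a prompt for human review, not proof of bias; whether a class is genuinely rare in the target environment or simply under-collected is exactly the kind of judgment reviewers contribute.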

Key Components of Human-in-the-Loop Video Annotation Workflows

Successful HITL workflows involve several interconnected steps that combine automation with human expertise.

Automated Pre-Annotation

Modern annotation tools often use AI models to generate initial labels automatically. These models may identify objects, track movement across frames, or detect events in videos.

Automated pre-annotation significantly reduces the time required for manual labeling while providing a starting point for human reviewers.
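One lightweight form of pre-annotation, shown here purely as an illustration and not as a description of any specific tool, is keyframe interpolation: an annotator or detector places a box on a few keyframes and the tool fills in the frames between them as drafts. The sketch assumes a single object with axis-aligned boxes and uses plain linear interpolation in place of a learned tracker; `Box`, `lerp`, and `interpolate_boxes` are hypothetical names.

```python
from dataclasses import dataclass
from typing import Dict


@dataclass(frozen=True)
class Box:
    """Axis-aligned bounding box in pixel coordinates (hypothetical format)."""
    x1: float
    y1: float
    x2: float
    y2: float


def lerp(a: float, b: float, t: float) -> float:
    """Linear interpolation between a (t=0) and b (t=1)."""
    return a + t * (b - a)


def interpolate_boxes(keyframes: Dict[int, Box]) -> Dict[int, Box]:
    """Fill every frame between consecutive keyframes with an interpolated box.

    Frames before the first keyframe or after the last one are not filled.
    """
    if not keyframes:
        return {}
    frames = sorted(keyframes)
    boxes: Dict[int, Box] = {}
    for start, end in zip(frames, frames[1:]):
        a, b = keyframes[start], keyframes[end]
        for f in range(start, end):
            t = (f - start) / (end - start)
            boxes[f] = Box(lerp(a.x1, b.x1, t), lerp(a.y1, b.y1, t),
                           lerp(a.x2, b.x2, t), lerp(a.y2, b.y2, t))
    # The last keyframe has no successor, so it is copied unchanged.
    boxes[frames[-1]] = keyframes[frames[-1]]
    return boxes


if __name__ == "__main__":
    # Keyframes on frames 0 and 10; frames 1-9 become drafts for human review.
    draft = interpolate_boxes({0: Box(0, 0, 10, 10), 10: Box(10, 0, 20, 10)})
    print(draft[5])  # Box(x1=5.0, y1=0.0, x2=15.0, y2=10.0)
```

Interpolated frames are only a starting point: wherever the object changes direction or speed, a reviewer still needs to adjust the boxes or add another keyframe.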

Human Validation and Correction

Once automated annotations are generated, human annotators review them carefully. They verify object boundaries, correct classification errors, and ensure temporal consistency across frames.

Human validation is critical for maintaining dataset quality, particularly for tasks like object tracking, polygon annotation, and semantic segmentation.
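Part of the temporal-consistency check can be automated so reviewers know where to look first. The minimal sketch below, which uses assumed conventions rather than a standard procedure, flags frames in one object track where the box jumps abruptly (low intersection-over-union, or IoU, with the previous frame) or where the track skips frames. The 0.5 IoU threshold, the tuple box format, and the function names are illustrative assumptions.

```python
from typing import Dict, List, Tuple

BoxT = Tuple[float, float, float, float]  # (x1, y1, x2, y2), assumed pixel coordinates


def iou(a: BoxT, b: BoxT) -> float:
    """Intersection-over-union of two axis-aligned boxes."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def flag_inconsistent_frames(track: Dict[int, BoxT], min_iou: float = 0.5) -> List[int]:
    """Return frames where the track skips frames or the box jumps abruptly.

    `track` maps frame index -> box for a single object ID.
    """
    frames = sorted(track)
    flagged: List[int] = []
    for prev, cur in zip(frames, frames[1:]):
        skipped = cur != prev + 1
        jumped = iou(track[prev], track[cur]) < min_iou
        if skipped or jumped:
            flagged.append(cur)
    return flagged


if __name__ == "__main__":
    track = {
        0: (0, 0, 10, 10),
        1: (1, 0, 11, 10),     # small move: consistent
        2: (30, 30, 40, 40),   # sudden jump: possible ID switch
        3: (31, 30, 41, 40),
        5: (31, 30, 41, 40),   # frame 4 missing
    }
    print(flag_inconsistent_frames(track))  # [2, 5]
```

A flagged frame is not necessarily wrong, since the object may have been occluded or moved quickly; the check only prioritizes what a human reviewer inspects.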

Active Learning Integration

Active learning is another important component of HITL workflows. In this approach, machine learning models identify uncertain or difficult samples and request human input specifically for those cases.

By focusing human effort on the most challenging data points, organizations can improve model accuracy while minimizing annotation costs.
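A common way to choose the "uncertain" samples is uncertainty sampling. The minimal sketch below assumes the model outputs a class-probability vector for each frame, ranks frames by the entropy of that distribution, and sends the top `budget` frames to annotators. The frame IDs and the `select_for_review` name are hypothetical, and other criteria (prediction margin, ensemble disagreement) are equally valid choices.

```python
import math
from typing import Dict, List, Sequence


def entropy(probs: Sequence[float]) -> float:
    """Shannon entropy (in nats) of a predicted class distribution."""
    return -sum(p * math.log(p) for p in probs if p > 0)


def select_for_review(predictions: Dict[str, Sequence[float]], budget: int) -> List[str]:
    """Pick the `budget` samples whose predictions are most uncertain."""
    ranked = sorted(predictions, key=lambda sid: entropy(predictions[sid]), reverse=True)
    return ranked[:budget]


if __name__ == "__main__":
    # Hypothetical per-frame class probabilities from a pre-annotation model.
    predictions = {
        "clip_a/frame_001": [0.98, 0.01, 0.01],  # confident
        "clip_a/frame_002": [0.34, 0.33, 0.33],  # near-uniform: very uncertain
        "clip_b/frame_010": [0.60, 0.30, 0.10],  # moderately uncertain
    }
    print(select_for_review(predictions, budget=2))
    # ['clip_a/frame_002', 'clip_b/frame_010']
```

The `budget` parameter is where the cost saving comes from: annotators see only the frames the model finds hardest, while confident predictions can be spot-checked instead of reviewed exhaustively.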

Iterative Model Retraining

After human corrections are applied, the updated annotations are fed back into the training pipeline. The machine learning model is retrained using these improved datasets, enabling it to produce more accurate predictions in subsequent iterations.

This cycle of automation → human review → retraining forms the foundation of effective human-in-the-loop systems.
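The cycle can be expressed as a small loop over batches of video frames. In this sketch `predict`, `review`, and `retrain` are hypothetical placeholders for a real model, an annotation tool, and a training job. The toy demo substitutes a lookup table for the model so the effect of human feedback shows up as a falling correction count from one round to the next.

```python
from typing import Callable, Dict, List, Tuple


def run_hitl(
    batches: List[List[str]],
    predict: Callable[[str], str],
    review: Callable[[str, str], str],
    retrain: Callable[[Dict[str, str]], None],
) -> Tuple[Dict[str, str], List[int]]:
    """Run one automation -> human review -> retraining cycle per batch.

    Returns the accumulated labeled dataset and how many proposed labels
    reviewers had to correct in each round.
    """
    dataset: Dict[str, str] = {}
    corrections_per_round: List[int] = []
    for batch in batches:
        corrections = 0
        for item in batch:
            proposed = predict(item)        # automated pre-annotation
            final = review(item, proposed)  # human accepts or corrects
            if final != proposed:
                corrections += 1
            dataset[item] = final
        corrections_per_round.append(corrections)
        retrain(dataset)                    # feed corrected labels back
    return dataset, corrections_per_round


if __name__ == "__main__":
    # Toy stand-ins: ground truth known to the "reviewer", and a lookup-table
    # "model" keyed on the item prefix. Neither reflects a real system.
    truth = {"car": "vehicle", "ped": "pedestrian", "bike": "cyclist"}
    learned: Dict[str, str] = {}

    def predict(item: str) -> str:
        return learned.get(item.split("_")[0], "vehicle")

    def review(item: str, proposed: str) -> str:
        return truth[item.split("_")[0]]

    def retrain(data: Dict[str, str]) -> None:
        learned.update({item.split("_")[0]: label for item, label in data.items()})

    batches = [["car_1", "ped_1", "bike_1"], ["car_2", "ped_2", "bike_2"]]
    _, corrections = run_hitl(batches, predict, review, retrain)
    print(corrections)  # [2, 0]
```

In a production pipeline, retraining would typically run less often than once per batch, and the per-round correction count is one practical signal of whether the loop is actually improving the model.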

Applications of Human-in-the-Loop Video Annotation

Human-in-the-loop video annotation is widely used across industries where accurate visual data interpretation is essential.

Autonomous Driving

Self-driving vehicles rely on annotated video datasets to detect pedestrians, vehicles, traffic signs, and road conditions. Human oversight ensures accurate labeling of complex road scenarios.

Smart Surveillance

Security systems use annotated video footage to detect suspicious behavior, track individuals, and analyze crowd movement patterns.

Retail Analytics

Retail organizations use video annotation to analyze customer behavior, optimize store layouts, and improve operational efficiency.

Healthcare and Medical Imaging

Medical researchers rely on annotated videos for surgical analysis, diagnostic imaging, and patient monitoring.

In each of these industries, combining automation with human expertise ensures reliable AI performance.

Advantages of Video Annotation Outsourcing with HITL

For organizations building AI systems, managing large annotation projects internally can be expensive and time-consuming. This is why many companies rely on video annotation outsourcing to specialized providers.

Partnering with an experienced data annotation company offers several advantages:

- Access to trained annotators and domain experts
- Scalable workflows for large datasets
- Quality assurance and multi-layer validation
- Faster project turnaround times

A professional video annotation company like Annotera combines advanced annotation tools with structured HITL processes to deliver high-quality labeled datasets at scale.

Annotera’s Human-in-the-Loop Annotation Approach

At Annotera, we follow a structured human-in-the-loop framework designed to maximize accuracy, efficiency, and scalability.

Our workflow includes:

- AI-assisted pre-annotation to accelerate labeling
- Multi-stage human review for quality assurance
- Domain-specific annotation guidelines
- Continuous feedback loops for model improvement

By combining technology with skilled human expertise, Annotera helps organizations build high-performance AI models while reducing annotation complexity.

Whether businesses require large-scale video labeling or specialized computer vision datasets, our data annotation outsourcing services provide reliable solutions tailored to their needs.

The Future of Human-in-the-Loop Video Annotation

As AI technologies continue to evolve, the demand for high-quality training data will only increase. Fully automated annotation systems still struggle with complex real-world scenarios, making human oversight indispensable.

Future annotation workflows will likely combine advanced AI pre-annotation, active learning, and human validation to create more efficient and scalable data pipelines.

Organizations that adopt human-in-the-loop approaches will be better positioned to build accurate, reliable, and ethically responsible AI systems.

Conclusion

Human-in-the-loop approaches have become a cornerstone of modern video annotation workflows. By integrating human expertise with automated tools, HITL systems ensure high-quality datasets that power accurate machine learning models.

From handling edge cases to reducing bias and improving model performance, human involvement remains essential for successful AI development.

As a leading video annotation company, Annotera leverages human-in-the-loop methodologies to deliver precise, scalable, and reliable annotation services. Through expert data annotation outsourcing and advanced workflows, we help organizations transform raw video data into powerful training datasets that drive next-generation AI innovation.
